Last updated: May 2026

QUICK ANSWER

This 9-point checklist gives plant heads and IT managers a scoring framework to evaluate any AI video analytics platform in India before committing to a pilot. It covers architecture, legacy CCTV compatibility, audit-evidence generation, model depth, custom-training speed, multi-site scaling, alert routing, data residency, and named proof points.

- 9: Evaluation criteria every buyer needs

- <30 days: Go-live on existing plant CCTV

- 200+: Pre-trained factory AI models

- 0: VLAN or firewall changes required

The plant heads in India who pick the right AI video analytics platform in 2026 are not the ones who watch the most demos. They are the ones who walk in with a scoring rubric.

Customer audits have changed the economics. Your Tier-1 OEM no longer accepts “we have an SOP” as compliance evidence. They want timestamped PPE compliance logs — by zone, by shift, exportable in under 24 hours. The manufacturer who can produce that data protects the contract. The one who cannot faces a formal CAPA request and, if unresolved, a contract reallocation.

This guide gives you a 9-point checklist for evaluating any AI video analytics platform for Indian plants before it goes on your shortlist. Use it to score vendors at your next demo meeting and compress a three-week selection process into a single afternoon.

Already shortlisting vendors?

Book a 30-minute plant-fit walkthrough: not a generic demo, but a session built around your plant type, NVR setup, and audit calendar.

Why 2026 Is the Year Indian Manufacturing Buyers Need a Checklist, Not a Shortlist

For the better part of a decade, video surveillance in Indian manufacturing served one master: the Factories Act 1948. Install cameras in prescribed locations, store footage for the prescribed period, produce recordings on request. The obligation ended there.

That compliance floor still exists. What has changed is the ceiling above it — and the ceiling now has teeth.

Tier-1 OEM customers in automotive, white goods, and electronics have systematised supplier audits with contractual enforcement. A failed OEM audit does not generate a government fine. It generates a Corrective Action and Preventive Action request with a 60-day resolution window and — in cases that do not close — a reduced allocation or a supplier-list requalification. The financial exposure of a single failed OEM audit now exceeds the annual cost of any regulatory penalty most Indian plants would face.

The data these audits demand is specific: PPE compliance records timestamped by work zone and shift, restricted-access intrusion logs, material-handling SOP adherence rates. Compiling this retrospectively from footage libraries requires days of supervisor time. That is audit-risky, not audit-ready.

KEY INSIGHT

Industrial insurance renewals in 2025–26 are increasingly requiring EHS compliance data that mirrors OEM audit expectations. Plants demonstrating continuous automated monitoring are being rewarded with better risk classifications. Those that cannot produce the data are absorbing higher premiums.

Alignment to ISO 45001 occupational health and safety standards is increasingly demonstrated with video-based evidence rather than manual inspection rounds. Any evaluation of video analytics software in India that skips the audit-evidence question is evaluating the wrong problem. A checklist that scores vendors on this criterion stops the wrong vendors at the door, before they consume three weeks of your procurement calendar.

The 9-Point Evaluation Checklist for an AI Video Analytics Platform in India

Score each criterion 0 (not met), 1 (partially met, or claimed but not demonstrated), or 2 (fully met and verified). A vendor below 12/18 should not reach your pilot stage. The editable Google Sheet version is linked in the CTA below.

1. Edge-or-Cloud Architecture: Where Does Inference Actually Run?

This is the first fork in any vendor evaluation. Edge inference means AI processing runs on a compact on-site box wired to your cameras, so raw video never leaves the plant. Cloud inference means video streams travel offsite to a vendor’s servers, which creates WAN bandwidth costs, adds network round-trip latency to real-time alerts, and creates data-residency exposure. For auto, pharma, and chemical plants where production footage contains process IP, edge inference is not a nice-to-have. A vendor who cannot run on-premise should not make your shortlist for a production-floor deployment.

What to ask the vendor

Where does inference run for live PPE detection? Show me the network architecture diagram. What is the WAN bandwidth requirement per camera?
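To put the WAN bandwidth question in numbers, a back-of-envelope sketch can help. The 4 Mbps per-camera bitrate and 2 KB per alert event used here are illustrative assumptions, not figures from any vendor or from this guide; substitute the values from your own NVR and the vendor's architecture diagram.

```python
def cloud_uplink_mbps(cameras: int, bitrate_mbps_per_camera: float = 4.0) -> float:
    """Sustained WAN uplink needed if every camera stream is sent offsite.

    The default bitrate is an assumed placeholder, not a measured value.
    """
    return cameras * bitrate_mbps_per_camera


def edge_uplink_mbps(events_per_hour: int, event_size_kb: float = 2.0) -> float:
    """Average WAN uplink if only alert metadata leaves the plant.

    The default event size is an assumed placeholder.
    """
    bits_per_hour = events_per_hour * event_size_kb * 1000 * 8
    return bits_per_hour / 3600 / 1_000_000


if __name__ == "__main__":
    print(f"Cloud inference, 64 cameras: {cloud_uplink_mbps(64):.0f} Mbps")
    print(f"Edge inference, 500 events/hour: {edge_uplink_mbps(500):.4f} Mbps")
```

Under these assumptions the gap is several orders of magnitude, which is why the per-camera bandwidth answer is worth getting in writing.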

2. Legacy NVR / VMS Compatibility: No Rip-and-Replace

Your plant has existing CCTV: cameras, cable runs, an NVR or VMS, and an IT team that has no appetite for a network overhaul. A capable AI video analytics platform for Indian manufacturing connects to existing IP cameras via RTSP and integrates with your NVR through ONVIF, the open industry standard for IP camera interoperability. Most camera infrastructure installed in Indian plants in the last 6–8 years is compatible. Any vendor whose proposal opens with “assess and upgrade your NVR” is signalling that their platform was built for greenfield deployments, not the brownfield plants that make up 90% of Indian manufacturing.

What to ask the vendor

Which NVR and VMS brands have you connected to in deployed Indian plants? Can you go live on our cameras without VLAN changes or firewall rules?

3. Audit-Ready Evidence Layer: The Data Your OEM Customer Wants

Detecting a PPE violation in real time is table stakes. Producing a 30-day compliance log — by zone, by shift, by camera — exportable in PDF or CSV within 24 hours — is the audit deliverable. Ask the vendor to open their reporting console during the demo and generate this report for a sample deployment. If they cannot build it live in the demo, they cannot produce it in an audit. Plants that have adopted video analytics software for manufacturing with a proper evidence layer consistently report cutting audit preparation time from 2–3 days to under 2 hours.

What to ask the vendor

Open your reporting console now and show me a 30-day PPE compliance log, by shift, by zone. How do I export it? How long does it take to generate?
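As a reference point for what that report contains, here is a minimal sketch of rolling raw detections up into a zone-by-shift compliance log and exporting it as CSV. The `PpeObservation` fields and the column names are hypothetical and do not reflect any vendor's schema.

```python
import csv
import io
from collections import defaultdict
from dataclasses import dataclass


@dataclass(frozen=True)
class PpeObservation:
    """One detection result. Field names are hypothetical, not a vendor schema."""
    timestamp: str  # ISO 8601 string
    zone: str
    shift: str
    compliant: bool


def compliance_by_zone_shift(observations):
    """Return {(zone, shift): (compliant_count, total_count)}."""
    totals = defaultdict(lambda: [0, 0])
    for obs in observations:
        bucket = totals[(obs.zone, obs.shift)]
        bucket[0] += int(obs.compliant)
        bucket[1] += 1
    return {key: tuple(value) for key, value in totals.items()}


def to_csv(summary) -> str:
    """Render the summary as CSV text, sorted by zone and then shift."""
    buffer = io.StringIO()
    writer = csv.writer(buffer, lineterminator="\n")
    writer.writerow(["zone", "shift", "compliant", "total", "compliance_pct"])
    for (zone, shift), (ok, total) in sorted(summary.items()):
        writer.writerow([zone, shift, ok, total, f"{100 * ok / total:.1f}"])
    return buffer.getvalue()
```

A vendor's console should produce the equivalent of `to_csv(compliance_by_zone_shift(...))` for a 30-day window without anyone writing code; the sketch only shows how little ambiguity there is in what the output should look like.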

4. Pre-Trained Model Library: How Many Use Cases Are Day-One Ready?

Custom model training takes time and money. A wide pre-trained library means you extract value from cameras that are already watching your floor, starting from go-live. A serious platform for Indian manufacturing ships with pre-trained models for PPE compliance (helmets, vests, gloves, goggles), restricted-zone intrusion, tailgating, ANPR, fire and smoke, SOP hand-sequence adherence, and housekeeping non-compliance. A catalogue of 200+ pre-built models means your Phase 1 covers the highest-priority use cases without a single day of custom training. Vendors with catalogues under 50 models will push you into expensive, months-long custom-model engagements for standard use cases.

What to ask the vendor

Show me your full manufacturing model catalogue. Which pre-trained models are relevant to our specific process type? How many are available without custom training?

5. Custom Agent Training Timeline: Days, Not Quarters

Every plant has SOP-specific gestures, machine-specific safety requirements, and product-specific quality checks that no pre-trained model covers. When you need a custom model — a gesture-sequence verification for a specific assembly step, say — how long is start-to-deploy? A mature platform should train and deploy a custom detection agent in days, using your own camera footage as training data. Vendors who quote 8–12 weeks for custom models are outsourcing the work or lack the in-house tooling. Custom-model velocity is a direct proxy for post-deployment responsiveness: when your SOP changes six months in, you need a vendor who can ship the update in days, not schedule a project.

What to ask the vendor

Walk me through your custom model training workflow, start to deploy. How many days? Who labels the training data? Do you do this in-house?

6. Multi-Site Rollout Pattern: One Template, Many Plants

If your organisation operates three or more plants, per-plant deployment effort is the variable that determines whether the programme scales or becomes a permanent IT project. A platform built for multi-site deployment has a templated architecture: once Plant 1 is configured — zones, model assignments, alert routing, dashboards — that configuration replicates to Plants 2, 3, and 4 in weeks, not months. Ask specifically about the multi-site management console. Can a central EHS or operations team manage alerts, update model configurations, and review dashboards across all sites from a single interface? If yes, your EHS team runs the system. If no, the system runs your EHS team.

What to ask the vendor

How do you replicate a deployment from Plant 1 to Plant 2? Show me the multi-site management console. What does central oversight of 4 sites look like in practice?
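One way to picture templated replication is as a deep copy of the Plant 1 configuration with site-specific overrides. This is a sketch under assumptions: the `SiteConfig` shape, the `replicate` helper, and the example zone and model names are all hypothetical, not a description of how any particular platform stores its configuration.

```python
import copy
from dataclasses import dataclass, field


@dataclass
class SiteConfig:
    """Hypothetical shape of a per-plant configuration."""
    site: str
    zones: dict[str, list[str]] = field(default_factory=dict)      # zone -> model names
    alert_routing: dict[str, str] = field(default_factory=dict)   # violation -> role


def replicate(template: SiteConfig, site: str, zone_overrides=None) -> SiteConfig:
    """Copy the template to a new site, applying local zone overrides.

    A deep copy is used so later edits at one site never leak into another.
    """
    clone = copy.deepcopy(template)
    clone.site = site
    clone.zones.update(zone_overrides or {})
    return clone


if __name__ == "__main__":
    plant1 = SiteConfig(
        site="Plant 1",
        zones={"Press Shop": ["ppe_helmet", "ppe_gloves"]},
        alert_routing={"ppe": "shift_supervisor"},
    )
    plant2 = replicate(plant1, "Plant 2", {"Warehouse": ["zone_intrusion"]})
    print(plant2)
```

The question for the vendor is whether their console does this for you, or whether each new site starts from a blank configuration.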

7. Alert Routing and Escalation: Closed-Loop, Role-Based, Logged

A real-time PPE alert that fires to one supervisor’s phone and disappears unacknowledged is a notification, not a safety system. Proper alert architecture routes based on violation type, zone, shift, and time of day, then escalates automatically if no acknowledgement arrives within a configured window. Every alert, every acknowledgement, and every resolution action is logged. That log is audit evidence. Indian plants that have moved their PPE compliance monitoring from notification-only to closed-loop alert management report incident recurrence rates dropping significantly, because accountability is visible and every non-response is on the record. Alert channels should include WhatsApp, SMS, email, and webhook integration with your EHS or CMMS system.

What to ask the vendor

Show me the alert escalation logic. If a restricted-zone breach happens at 3 AM on a Saturday, who gets the alert? What is the escalation chain if there is no acknowledgement?
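The escalation behaviour described above can be sketched as a time-based chain. The roles and the 5- and 15-minute windows are assumptions chosen for illustration; a real deployment would configure these per violation type, zone, and shift.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta
from typing import Optional


@dataclass
class Alert:
    violation: str
    zone: str
    raised_at: datetime
    acknowledged_at: Optional[datetime] = None


# Hypothetical escalation chain: (role, minutes after the alert is raised).
ESCALATION_CHAIN = [
    ("shift_supervisor", 0),
    ("ehs_officer", 5),
    ("plant_head", 15),
]


def recipients_due(alert: Alert, now: datetime) -> list[str]:
    """Roles that should have been notified by `now`.

    Escalation stops at the acknowledgement time, so roles whose delay
    had not elapsed when the alert was acknowledged are never paged.
    """
    cutoff = min(now, alert.acknowledged_at) if alert.acknowledged_at else now
    elapsed = cutoff - alert.raised_at
    return [
        role
        for role, delay_minutes in ESCALATION_CHAIN
        if elapsed >= timedelta(minutes=delay_minutes)
    ]
```

Logging the output of a function like this alongside each acknowledgement timestamp is what turns an alert stream into the audit trail described in this criterion.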

8. Data Residency and Privacy Posture: On-Prem Inference, Zero Production Data Egress

Production-floor footage contains commercially sensitive material: assembly sequences, process speeds, yield patterns, and material handling. This is data that a competitor, or a vendor selling to your competitors, should not access. On-premise edge inference keeps raw video entirely inside your network. Processed metadata (alert timestamps, compliance percentages, anonymised headcounts) can optionally sync to a cloud dashboard, but the footage never leaves the plant. Cloud-only architectures are appropriate only for lower-sensitivity environments. For plants operating under OEM non-disclosure agreements, the legal question is not about Indian regulation. It is about what your OEM customer’s NDA says about third-party access to production data.

What to ask the vendor

Where is raw video stored and processed? What data leaves my plant network? Where are your cloud servers located? Can we operate fully air-gapped with zero cloud dependency?

9. Customer-Grade Proof Points: Named, Verifiable Deployments

Every AI video analytics company in India claims multi-site enterprise deployments. The ones who can name them, provide direct reference contacts, and point to published case studies with measurable outcomes are a far smaller group. Before approving a pilot, ask for three references: one in your sub-industry, one covering a multi-site rollout, and one where the platform’s evidence layer was used in an OEM or ISO audit. Anonymised references are not references. Apply the same due diligence you would to any capital equipment purchase: the evidence is in named, callable deployments. See how the leading AI video analytics companies in India compare across these criteria.

What to ask the vendor

Give me three customer references in Indian manufacturing — named companies, multi-site deployments, at least one that used your platform to respond to an OEM audit. May I contact them directly?

How to Score a Vendor Against This Checklist

The rubric is straightforward. Score each of the 9 criteria as follows:

| Score | What it means |
|---|---|
| 2 | Fully met: demonstrated live in demo or verified via a reference call |
| 1 | Partially met: claimed but not demonstrated, or met with noted caveats |
| 0 | Not met: vendor cannot demonstrate, deflects the question, or defers to a follow-up |

Maximum score: 18. Use this guide to interpret results:

- 16–18: Strong pilot candidate. Proceed to commercial terms.

- 12–15: Conditional pilot. Name the gaps as explicit pilot acceptance criteria before signing.

- Below 12: Walk away. The gaps are architectural — they will not close during a pilot.
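The rubric is simple enough to automate. The sketch below applies the 0/1/2 scoring and the three bands listed above; the criterion keys and the `score_vendor` function name are hypothetical labels for the nine checklist items.

```python
CRITERIA = [
    "edge_or_cloud_architecture",
    "legacy_nvr_vms_compatibility",
    "audit_ready_evidence",
    "pre_trained_model_library",
    "custom_training_timeline",
    "multi_site_rollout",
    "alert_routing_escalation",
    "data_residency_privacy",
    "named_proof_points",
]


def score_vendor(scores: dict[str, int]) -> tuple[int, str]:
    """Total a vendor's scores (0, 1, or 2 per criterion) and return the band."""
    missing = set(CRITERIA) - set(scores)
    if missing:
        raise ValueError(f"Unscored criteria: {sorted(missing)}")
    if any(scores[name] not in (0, 1, 2) for name in CRITERIA):
        raise ValueError("Each criterion must be scored 0, 1, or 2")

    total = sum(scores[name] for name in CRITERIA)
    if total >= 16:
        verdict = "Strong pilot candidate"
    elif total >= 12:
        verdict = "Conditional pilot"
    else:
        verdict = "Walk away"
    return total, verdict
```

Requiring a score for every criterion is deliberate: an unanswered question should be recorded as a 0, not silently skipped.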

PRO TIP

Score all shortlisted vendors in the same session — same demo script, same questions, same scorer. The divergence is almost always larger than expected, and it converts a three-week vendor-selection debate into a one-hour scorecard review.

FREE RESOURCE

Download the Editable 9-Point Scoring Rubric

Google Sheet format. Pre-loaded with vendor comparison columns, scoring formulas, and the “what to ask” prompts for each criterion. Takes 10 minutes to complete during a demo.

Case Study: How 20 Microns Limited Evaluated Edge AI Against This Checklist

20 Microns Limited is a Vadodara-based specialty minerals manufacturer with multiple production sites across India. When their IT team evaluated AI video analytics companies in India for a site visibility and security programme, they started from the position most Indian Tier-2 manufacturers start from: an existing NVR deployment, limited IT bandwidth for an infrastructure overhaul, and a hard requirement that any new solution integrate without disrupting the plant network.

“As an IT Manager, I value solutions that are powerful yet easy to integrate. Agrex.ai provided a scalable Edge-AI solution that has enhanced our site visibility and security without requiring a total overhaul of our legacy systems.”

— Kalpesh Parmar, Manager — IT, 20 Microns Limited

Against the checklist: Criterion 1 (edge architecture) scored 2. AIVIS, Agrex AI’s platform, runs on an on-site edge box with no WAN bandwidth requirement for core detection. Criterion 2 (legacy NVR compatibility) scored 2. The platform connected via RTSP to the existing NVR without a camera swap or VLAN change, and the IT team made no network configuration modifications.

Phase 1 focused on site visibility and security: restricted-zone access detection, after-hours intrusion alerts, and camera coverage mapping across the site. Go-live came in under 30 days from kickoff.

Phase 2 extended the same edge box to production-floor compliance: PPE adherence logging by zone and shift, operator SOP sequence monitoring, and shift handover documentation. No additional hardware was required — Phase 2 models ran on the same infrastructure as Phase 1.

Multi-site scaling (Criterion 6): The Plant 1 architecture template — zone assignments, model configurations, alert routing, dashboards — was replicated to additional 20 Microns facilities. Each site team received a pre-configured package rather than building from scratch. The IT manager at each site did not need to re-engage the vendor for a bespoke deployment.

The 20 Microns case represents a pattern that appears consistently across Indian Tier-2 manufacturing: existing NVR infrastructure, phased rollout from security to compliance, multi-site scaling from a templated base, and an IT team that retains full control of the plant network throughout.

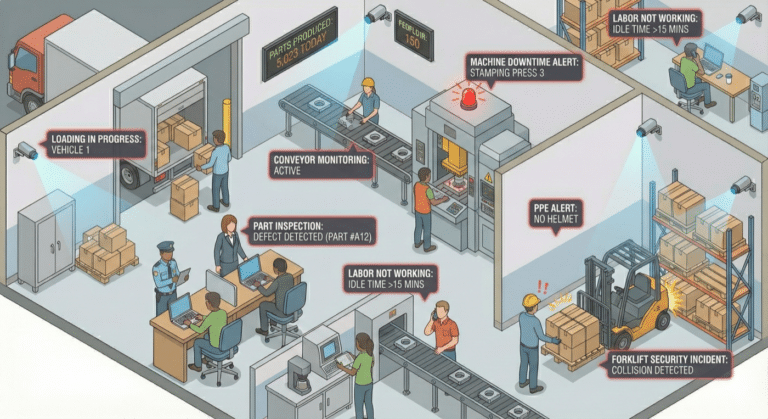

From a Video Monitoring System to an Autonomous Video Analytics Platform

A video monitoring system records what happens. An AI video analytics platform acts on what happens. The operational gap between those two sentences is measured in hours of incident lag and weeks of audit preparation time.

In a passive video monitoring system, the workflow is linear and retrospective: event occurs → footage is recorded → someone reviews it later → an incident report is filed, if anyone gets to it. The system is a storage medium. Every unit of intelligence in that loop is human, and every human in that loop has three other things to do. Detection-to-action lag in a passive monitoring environment is typically measured in hours on a good day and days when the shift supervisor is busy.

In an autonomous video analytics platform, the loop is closed by the system: event occurs → AI classifies it in under a second → alert routes to the designated responder by violation type, zone, and shift schedule → responder acknowledges → system logs the full chain with timestamps → unacknowledged alerts escalate automatically. No human has to be watching a screen. The accountability chain runs without a supervisor in the room.

For Indian manufacturing, this shift is most consequential in three contexts:

- PPE compliance: A violation on an active production line cannot be addressed after the fact. It needs to be flagged while the worker is still in the zone. Passive monitoring converts PPE compliance into a post-incident documentation exercise. An autonomous platform makes it a live, preventive process.

- Restricted-zone intrusion: Chemical storage, high-voltage enclosures, and live-tooling maintenance zones require immediate response when entered without authorisation. A monitoring system stores evidence of the breach. An autonomous platform triggers the response before the breach becomes an incident.

- Audit evidence: The closed-loop log from an autonomous platform is a ready-made audit deliverable. The footage archive from a passive monitoring system requires manual review to extract equivalent evidence — which is precisely the three-day scramble that OEM audits now expose.

The video analytics services in India that produce genuine operational value have moved the full stack (detection, classification, routing, escalation, logging) into the platform layer. Services that stop at detection and hand the response chain back to humans are selling an upgraded DVR. For a deeper look at security-specific autonomous monitoring architectures, see the guide to security video analytics and how similar principles apply outside the production floor.

Red Flags: When to Walk Away from an AI Video Analytics Vendor in India

A vendor scoring below 12 on the checklist is communicating something. Here are the five patterns that consistently signal a platform not ready for Indian manufacturing:

1. “Step 1: upgrade your IT infrastructure.” Any proposal that opens with a server-room requirement, NVR replacement, or VLAN reconfiguration is building a dependency before you have seen a single detection. Mature edge AI platforms deploy on your existing network. Infrastructure upgrade requirements are architectural immaturity, presented as enterprise requirements.

2. Cloud-only architecture for production-floor data. If inference runs on the vendor’s cloud and your production video streams are transiting their network, ask exactly where those servers sit, who has access to the footage, and what your data rights are on termination. Most cloud-only vendor agreements are not written for the IP sensitivity of manufacturing production data. This is a legal and operational risk that surfaces long after contract signing.

3. No named references in your sub-industry. “We serve leading Indian manufacturers” with no names attached is a pitch, not a reference. Before any pilot, ask for named contacts in auto, pharma, electronics, or chemicals — whichever matches your vertical. A vendor with a real track record will facilitate reference calls without hesitation. One who deflects is telling you something about the depth of their deployment base.

4. Custom-model timelines measured in quarters. If a vendor quotes 8–12 weeks to train a custom detection model, their training pipeline is not productised. You will wait months for SOP-specific use cases and pay consulting day rates throughout. Custom model turnaround in days is non-negotiable for any deployment where the SOP evolves.

5. “We’ll get back to you on the audit log format.” If the vendor cannot open a live audit-evidence report (PPE compliance by zone and shift, exportable, timestamped) during the demo, they do not have one. This is the most common gap among AI video analytics companies that entered the market from a security background and grafted AI detection onto a VMS. Detection without a compliant evidence layer is a security product with a manufacturing pitch deck.

Frequently Asked Questions

What’s the difference between video analytics software and an AI video analytics platform?

Video analytics software typically handles one or two detection tasks as standalone point tools, each with its own dashboard and renewal cycle. An AI video analytics platform unifies multiple use cases (PPE, intrusion, ANPR, SOP compliance, fire detection) under one inference engine, one audit-evidence layer, and one multi-site management console. The practical difference at audit time: a platform generates a consolidated compliance report across all your plants in under 24 hours; a stack of point tools needs a compliance manager and a weekend to produce equivalent output. For a detailed comparison, see the full review of video analytics software for manufacturing in India.

How long does AI video analytics deployment take in an Indian manufacturing plant?

With an edge-AI platform running on existing CCTV infrastructure, a standard single-site go-live is under 30 days. That window covers edge-box installation, camera-zone mapping, model configuration for Phase 1 use cases, alert routing setup, and supervisor training. Multi-site replication — Plant 2 onwards — typically takes 2–3 weeks per site once the Plant 1 architecture template is finalised. Vendors quoting 90+ days for a first deployment are building custom infrastructure rather than deploying a productised platform.

Does AI video analytics work with our existing CCTV cameras?

Yes, for the vast majority of Indian manufacturing installations. Cameras need to output RTSP streams and the NVR or VMS should support ONVIF. Most IP cameras installed in Indian plants over the last 6–8 years meet both specifications, across brands including Hikvision, Dahua, CP Plus, and Axis. The edge AI box connects to your existing network without VLAN changes or firewall rule modifications. Legacy analogue cameras on a DVR can be bridged with a low-cost analogue-to-IP video encoder, avoiding a full camera replacement except in zones requiring high-resolution fine-detail detection.

What is the typical ROI timeline for video analytics in Indian manufacturing?

Most Indian manufacturing deployments reach payback within 2 quarters. The largest single ROI driver is avoided OEM audit penalties and contract reallocations, particularly for plants on quarterly or biannual OEM audit cycles, where a single failed audit can cost more than the entire platform investment. Secondary drivers include reduction in PPE-related incident costs, lower insurance premiums for plants that can demonstrate continuous compliance monitoring, and 4–6 supervisor-hours per plant per day freed from manual footage review and compliance log compilation.

Is on-premise inference mandatory for manufacturing data in India?

Not legally mandatory under current Indian data protection law, but operationally and contractually prudent for most production-floor deployments. Assembly sequences, process configurations, and yield data visible in production-floor footage are commercially sensitive. On-premise edge inference means raw video never leaves the plant network. The relevant legal question is not about Indian statute — it is about what your OEM customer’s NDA says about third-party access to production data. Most Tier-1 OEM supply agreements would classify production footage as confidential data subject to non-disclosure obligations.

Which AI video analytics companies in India serve manufacturing specifically?

Several companies offer AI video analytics for manufacturing in India, but the shortlist shrinks when you require multi-site edge AI deployment, 200+ pre-trained manufacturing models, and named customer references in your specific sub-industry. Agrex AI’s AIVIS platform is deployed across automotive, pharmaceutical, electronics assembly, and chemical manufacturing plants. Apply the 9-point checklist above to any vendor you evaluate. For a full market comparison, the guide to top AI video analytics companies in India covers 10 vendors across the same evaluation criteria.

Choosing the Right AI Video Analytics Platform in India: The Checklist Is the Decision

The 9-point checklist converts a vendor selection process from a features tour into an evidence review. Architecture fit, legacy compatibility, audit evidence capability, model depth, custom-training speed, multi-site scaling, alert routing, data residency, and named proof: these are the criteria that separate an AI video analytics platform built for Indian manufacturing from one that was merely marketed at it.

Plant heads who use a scoring framework leave demos with a number, not a feeling. They run a shortlist meeting that ends in a pilot decision, not a follow-up committee. The scoring rubric below gives you the tool to do that.

For plants already past evaluation and looking at multi-site rollout, the AIVIS for Manufacturing page covers the full deployment architecture, ROI model, and multi-site scaling pattern. For Indian retailers evaluating a parallel capability for store compliance and footfall, the same edge AI infrastructure applies; see retail video analytics for the equivalent framework.

NEXT STEP

Score Your Shortlisted Vendors in 10 Minutes

Download the editable 9-point scoring rubric and book a 30-minute plant-fit walkthrough. Bring the rubric to your next vendor demo and leave with a decision, not a to-do list.

ABOUT THE AUTHOR

Dhruv Jearath

Digital Marketing Executive, Agrex AI

Dhruv Jearath leads digital marketing at Agrex AI, specialising in AI-powered video analytics for Indian manufacturing, retail, and logistics. He writes on edge AI deployment, OEM compliance evidence, and industrial surveillance strategy for plant heads and operations teams across India.